When we talk to potential customers here at Salv, many of them quickly ask about Salv’s machine learning (ML) and artificial intelligence (AI) capabilities. It makes sense. It’s easy to assume, with incredible new technologies and data modelling like ML/AI, that using them will bring a company’s anti-money laundering (AML) capabilities to a whole new level. Which means every company should be using them in 2020, right?

Not quite.

After one of those many conversations we had with those in the industry, we set out to write down a few of our thoughts — but, because the topic is so critical, it’s evolved into something much, much longer.

At Salv, we’re home to a bunch of data scientists and maths nerds, myself included. Which is why it may come as a surprise to find that we made a conscious decision not to build ML/AI into our product. Especially considering many in our team have built these systems in high-performance environments like Skype, TransferWise, and Guardtime. So we’re confident we know what we’re doing. But, at the end of the day, not using ML/AI is what we think is the best direction for our customers.

Allow us to explain. But, first, be prepared, it’s an 18-minute read. So grab a chair and let’s dive in. Or, rather, drive in.

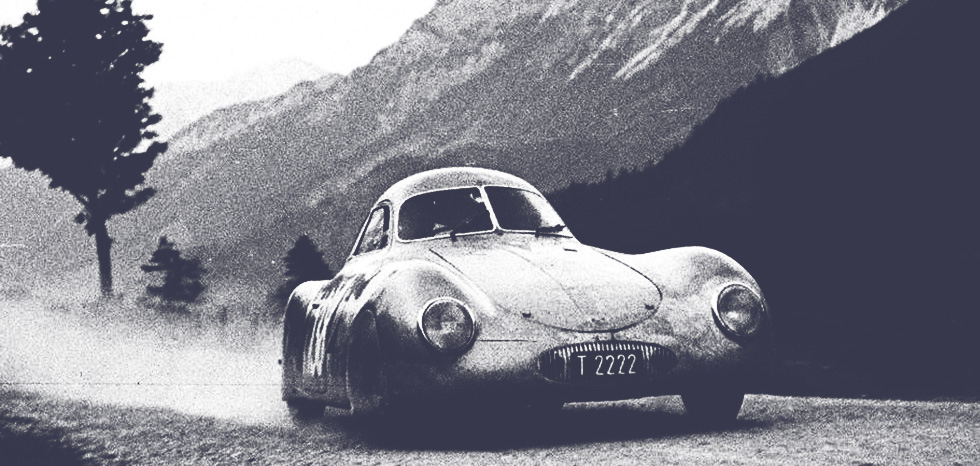

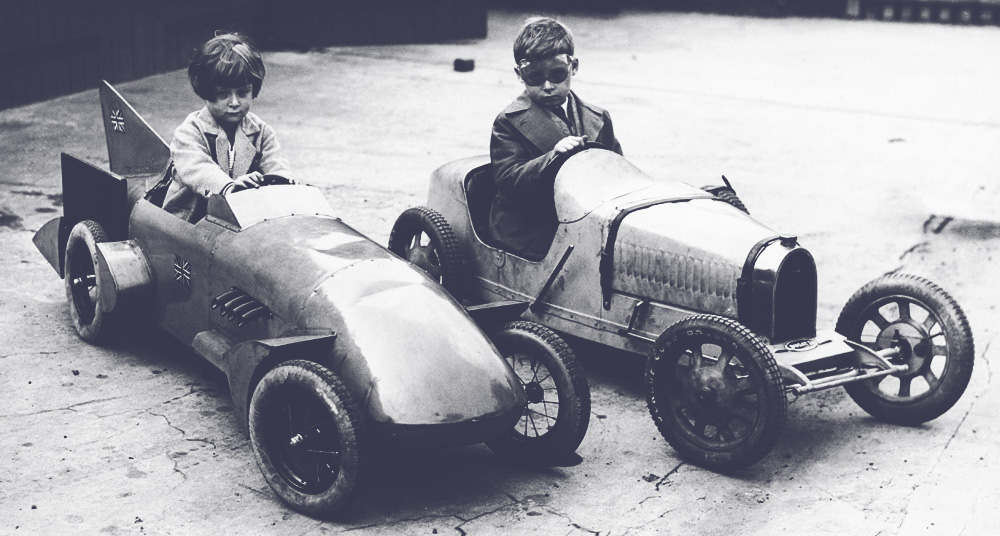

Machine learning is a Porsche, rules are a Toyota

Imagine your son has just turned 18 and recently passed his driving exam. Like many parents, you decide to buy him a car. Assuming money isn’t much of an issue for you, would you buy him a Porsche or a Toyota?

Porsches are top-of-the-line, right? Fast. Streamlined. Elegant, even. There’s no doubt your son would love it. And your neighbours would definitely be jealous — everyone would want to be the cool parent you are.

On the other hand, if you buy him a Toyota — new or used — then hopefully you also have a garage to hide it in.

But let’s boil this down to logic. Which car is most suitable for your son?

The Porsche, besides being ridiculously fast, is also expensive. Even if money is no object, the price tag will still probably sting. And it’s so powerful that it requires specialised training and years of driving experience to manage. If something breaks, finding a shop that can fix it will likely be a challenge. Yes, it’s the perfect car if you’re driving at high speeds — but I’m guessing, as a parent, you hope your son won’t be indulging in that feature. At the end of the day, the Porsche is high risk for your son’s safety, your wallet, and probably your future relationship if he accidentally wrecks the car but makes it out alive.

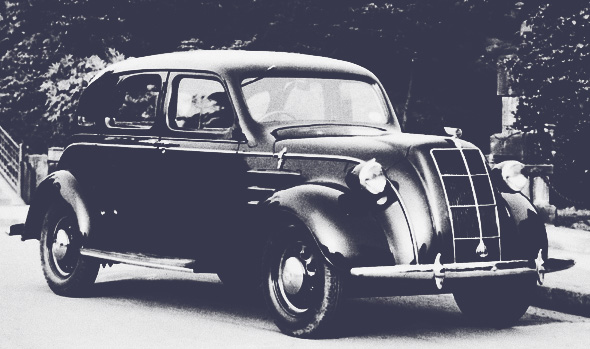

The Toyota, on the other hand, is reliable, honest, and hard-working. True, it doesn’t go nearly as fast as the Porsche. But that should be a plus if we’re talking about your 18-year-old son. Add on the bonus that it comes standard with bluetooth and tons of safety features. Sure, if you buy a new one today, maybe all you can change are the lightbulbs, but at least nearly any garage can fix it if something goes wrong.

AML is a bit like your 18-year-old son

If your son has been driving go-karts competitively since he was 6, then maybe a Porsche is the better option. The same goes for banks and fintechs. A perfect data environment combined with a team that has the needed skill sets is pretty rare in today’s financial institutions. Take this quote on data wrangling alone:

“A model is only as good as its inputs, and firms must first corral and cleanse the various internal and external data inputs. This is often one of the most time-consuming aspects of a machine learning platform deployment. One large bank executive says it took his FI almost a year to get the requisite info from its core banking platform and other internal sources and cleanse it. Another executive says that for any new modelling effort, his team typically spends 80% of its time on data wrangling and 20% on the actual modelling effort.” -AIM Evaluation: Fraud and AML Machine Learning Platform Vendors, March 2019, Aite

The good news is that if you, your team, and your business are lucky enough to have the perfect ecosystem, then AI/ML will work wonders for you.

But, for most of us, that isn’t a reality, and that’s why we’d go with the Toyota. AML scandals are the car accidents of the corporate world. Sometimes you’re lucky, but sometimes your very existence is threatened.

An easy way to understand it is that ML/AI amplifies or leverages whatever is good or bad in your business. If you have tons of great data, great processes, and a strong team, then ML/AI will allow your team to do what were previously impossible tasks. But, if your data is poor, your processes are inconsistent, or your team is unprepared — then ML/AI approaches will amplify that. Unfortunately, it means that your big problems will likely become much, much bigger. It’s the same as with a Porsche — good drivers are more likely to become even better. But poor drivers are more likely to end up in the ditch.

The Toyota equivalent for AML

The Toyota equivalent for AML is rules-based monitoring. Because it gives most of us the control that we desperately need.

Yes, it feels way more clunky.

Yes, it feels way less cool.

But it works.

And you’ll have the visibility that your financial institution is going to want to have. Today, it’s more important than ever that MLROs are absolutely certain they understand what their systems are doing. We’ve seen that, in the current climate, regulators are far less lenient, even when companies get it only a little wrong.

The danger of machine learning for AML

We’ve consulted for small, medium, and large financial companies all over Europe. A number of them were using ML. And, well, with most of them, red flags surfaced immediately.

Let’s use one real company and, for the sake of this example, say that they were in the automotive industry. They used some really cool ML models that proposed that every lime green VW Bug from 2003 that had manual window cranks and a sun roof was suspicious. When they looked deeper, it turned out that in 99% of the cases, they were right! However, when we came in, we immediately noticed that while they were right on this model, these particular VW Bugs comprised just a teeny teeny slice of their flows. That left a whole lot of other cars they weren’t checking at all. Which meant that, in their case, a lot of criminals got through scot-free.
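To make the VW Bug problem a bit more concrete, here’s a rough sketch in Python. Every number in it is made up for illustration and none of them come from the real company. The only point is that a model can be right 99% of the time on what it flags and still miss most of what matters.

```python
# Hypothetical numbers, chosen only to illustrate the point.
flagged = 1_000          # alerts raised by the model (the lime green VW Bugs)
flagged_and_bad = 990    # alerts that turned out to be truly suspicious
total_bad = 20_000       # all truly suspicious cases in the flows (assumed)

precision = flagged_and_bad / flagged   # how often an alert is right
recall = flagged_and_bad / total_bad    # how much of the bad activity is caught
missed = total_bad - flagged_and_bad    # suspicious cases that never alerted

print(f"precision: {precision:.1%}")
print(f"recall:    {recall:.2%}")
print(f"missed:    {missed} suspicious cases")
```

With these assumed figures, the model looks excellent if you only measure how often its alerts are right, while the vast majority of suspicious cases never generate an alert at all.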

In another example, one company kept their rules-based monitoring but started experimenting with ML in-house. Suddenly it was only alerting on customers who were buying fake goods from China. And in another instance it narrowed down to immigrant workers in Australia who were sending money home to South East Asia. Each time that happened, the team first had to notice the issue and then adjust the model. That took a lot of work and, in the end, it took them far more time to babysit their models than it was worth.

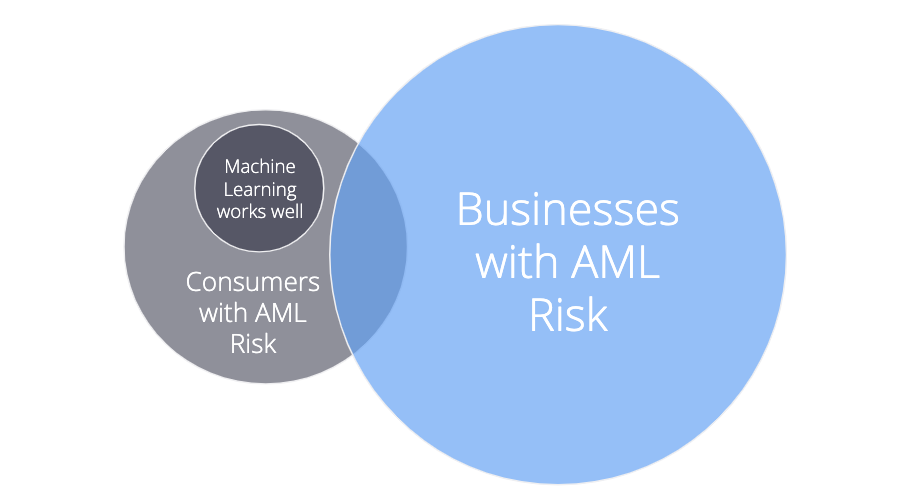

Where machine learning for AML shines

As I mentioned before, it’s not all doom and gloom. Machine learning approaches are effective when your business is stable, mature, and large. For example, if you’ve got a consumer business, a monoline product like a debit card, several million customers, have been operating in the same countries for years, and your AML team is experienced, then machine learning can definitely help. It’ll help with the most tedious of your tasks first: sorting through millions of transactions and finding the ones that are most obviously suspicious.

But if your consumer business branches out into SMB, you’ll find that the risks are quite different, even though there are areas of overlap. The input data is totally different (UBOs, company structures, payment behaviours, products) and the risks are of a totally different nature.

Honestly, ML/AI approaches aren’t helpful until the Compliance/AML team understands what’s going on. They need to understand the new criminal patterns, the new datasets, and the new normal customer behaviour, and they need to do this for a while before even thinking about building their first ML/AI training set.

Why AML isn’t ready for machine learning yet

It’s poorly understood

The main challenge is that, unfortunately, machine learning is poorly understood by most people, including data scientists. Sure, you can set up an advanced ML model in 5 lines of Python code. Many of us at Salv have done exactly that many times. But the problem is that you’d have no idea why the results are what they are. This is common, and it’s scary when it’s applied to AML. The reputation of entire financial institutions rests on AML controls.

You need a lot of data

You really do need a lot of data to do machine learning well. If you have fewer than 500,000 customers, for example, then it’s just not going to work. There isn’t enough training data. Supervised learning models are the most effective option, but you’ll need to have quite good detection mechanisms already in place to provide enough input to the model. Which means good ML can only follow good rule-based monitoring.

Money laundering cases are, fortunately, relatively rare. Typically, just 0.1-3% of transactions are flagged, and far fewer are reported. In our earlier example with the lime green VW Bugs, the model zoomed in on a particular type but missed the big picture. All of that came from highly unbalanced training data, in part because the company just didn’t have enough data to start. Unfortunately, this will always be a risk for ML in AML. Sure, there are ways around it, but you’ll need to have extremely capable data scientists who thoroughly understand your product and your AML domain so they can quickly spot the cases where your model suddenly over-calibrates for one type of behaviour and completely neglects the larger picture.
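Here’s a small sketch of why unbalanced data is so treacherous. It assumes, purely for illustration, that 0.1% of one million transactions are truly suspicious, and it scores a "model" that never raises a single alert. The figures are hypothetical, not measurements.

```python
# Assumed figures for illustration only.
total_transactions = 1_000_000
suspicious = 1_000                        # 0.1% of the total (hypothetical)
legitimate = total_transactions - suspicious

# A useless "model" that never raises an alert.
true_negatives = legitimate               # every legitimate transaction passes
false_negatives = suspicious              # every suspicious one passes too
caught = 0

accuracy = true_negatives / total_transactions
recall = caught / suspicious

print(f"accuracy: {accuracy:.1%}")        # looks great on paper
print(f"recall:   {recall:.1%}")          # catches nothing
```

A headline accuracy figure can look superb while the model catches nothing, which is why someone who understands both the data and the AML domain has to look well beyond the top-line metric.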

Questions you should ask before using ML/AI for AML

If it helps, we’ve put together key questions that senior compliance team members should ask when deciding how to use ML/AI, along with how typical machine learning and rule-based approaches would answer them.

Q: Do you have enough data?

- Machine Learning: It depends. If you have at least 500K customers, probably yes. Fewer than 500K customers? Then no.

- Rule-based approach: Yes, for sure. Rules work from the very first customer.

Q: Is your data high enough quality?

- Machine Learning: It depends. Data quality standards are extremely high. So if you’ve built up a solid rule-based system, layered on mature processes in addition to having a deep understanding of your customer base, market, and product — then ML can work. All of this data then has to go into a ‘feature extraction’ engine to ensure models can use it. You’ll typically need to extract 100-200 features to start with.

- Rule-based approach: Likely yes. Rules aren’t fussy. Rules will work even if you just have a list of customers, transactions and 10 fields in each table.
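To show how little a rule needs, here’s a minimal sketch that works on a plain list of transactions with three fields. The field names, the threshold, and the country codes are all hypothetical placeholders. This is not how Salv’s product is implemented, just an illustration of the idea.

```python
from dataclasses import dataclass

@dataclass
class Transaction:
    customer_id: str
    amount: float
    country: str

# Hypothetical rule: large payments to countries on a made-up watch list.
HIGH_RISK_COUNTRIES = {"XX", "YY"}   # placeholder codes, not real ones
AMOUNT_THRESHOLD = 10_000            # assumed threshold

def large_payment_to_high_risk_country(tx: Transaction) -> bool:
    """True when the transaction is both large and sent to a watched country."""
    return tx.amount >= AMOUNT_THRESHOLD and tx.country in HIGH_RISK_COUNTRIES

transactions = [
    Transaction("c1", 250.0, "EE"),
    Transaction("c2", 15_000.0, "XX"),
    Transaction("c3", 12_000.0, "EE"),
]

alerts = [tx for tx in transactions if large_payment_to_high_risk_country(tx)]
for tx in alerts:
    print(f"ALERT: customer {tx.customer_id} sent {tx.amount:.2f} to {tx.country}")
```

No training data and no feature extraction are involved: the rule gives an answer for the very first transaction it sees.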

Q: Can you manage new products or markets from their launch? For instance, you’re a consumer-only company and you start to offer an SMB product.

- Machine Learning: No. There isn’t sufficient data, and there won’t be for years.

- Rule-based approach: Likely yes. Rules work from the first transaction.

Q: Do you have the team needed to set up and monitor the system?

- Machine Learning: It depends. Can you attract someone among the top 5% of data scientists to come work for you, in your office, on AML in your team? If your vendor is supplying the data scientist capability, can you evaluate whether they’re in the top 5%? Can you teach them the specifics of your business so they can build great AML models?

- Rule-based approach: Likely yes. Rules are human readable. AML specialists combined with someone a bit more technical (an analyst, not data scientist) can implement, oversee, and rapidly improve the rule set. It depends on the system, but with Salv, you can manage this completely within your own team without needing support even from your internal IT team, let alone a vendor’s.

Q: Can you explain to your regulator or partner institution what your risk policies are?

- Machine Learning: It’s difficult. The policies are essentially model weights. These are barely comprehensible to data scientists, and nearly impossible for you to convey to regulators.

- Rule-based approach: Quite easy. It maps directly to risk policy documents. Assuming the proper audit controls are in place, the precise code implementation can be exported to a spreadsheet.

Q: If an AML specialist tells you a certain pattern is likely to result in a SAR, how easily can you stop it automatically?

- Machine Learning: It’s difficult. This requires feature extraction, modelling, testing, etc.

- Rule-based approach: It’s easy. As long as the rule-builder is powerful enough. Just modify a threshold or add a new rule configuration.
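One way to picture "modify a threshold or add a new rule configuration" is to keep the rules as plain data. The sketch below assumes a very simple rule format that we invented for this example. The rule names, fields, and thresholds are hypothetical.

```python
# Rules kept as plain data so a compliance analyst can read and change them.
# Rule names, fields, and thresholds are hypothetical.
rules = [
    {"name": "large_single_payment", "field": "amount", "min_value": 10_000},
]

def matching_rules(tx: dict, rules: list) -> list:
    """Return the names of every rule the transaction triggers."""
    return [r["name"] for r in rules if tx.get(r["field"], 0) >= r["min_value"]]

tx = {"amount": 9_500, "payments_last_24h": 12}
before = matching_rules(tx, rules)

# An AML specialist spots a pattern: tighten one threshold, add one rule.
rules[0]["min_value"] = 9_000
rules.append({"name": "rapid_payments", "field": "payments_last_24h", "min_value": 10})
after = matching_rules(tx, rules)

print("before:", before)
print("after: ", after)
```

The change is two lines of configuration, and the same transaction that slipped through before now triggers both rules.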

Q: Can you explain what your risk policies were on a specific date 1 year ago, and how those policies have changed vs today?

- Machine Learning: Difficult. See the previous answer. And, in addition, the underlying model data likely isn’t archived and can’t be reset to some arbitrary historical point.

- Rule-based approach: Easy. If the system logs all risk policy changes.
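As a rough sketch of what "logs all risk policy changes" buys you, the example below keeps an append-only change log and replays it to rebuild the rule set for any date. The log format, rule names, dates, and thresholds are all invented for illustration.

```python
from datetime import date

# Append-only log of rule changes: (effective date, rule name, threshold).
# A threshold of None means the rule was switched off. All entries are made up.
change_log = [
    (date(2019, 1, 10), "large_single_payment", 15_000),
    (date(2019, 6, 1), "rapid_payments", 10),
    (date(2019, 11, 20), "large_single_payment", 10_000),
]

def policy_as_of(log, when: date) -> dict:
    """Replay the log up to `when` to rebuild the rule set active on that day."""
    policy = {}
    for effective, name, threshold in sorted(log, key=lambda entry: entry[0]):
        if effective > when:
            break
        if threshold is None:
            policy.pop(name, None)
        else:
            policy[name] = threshold
    return policy

a_year_ago = policy_as_of(change_log, date(2019, 3, 1))
today = policy_as_of(change_log, date(2020, 3, 1))

# Rules whose threshold differs between the two dates: name -> (old, new).
changed = {name: (a_year_ago.get(name), value)
           for name, value in today.items() if a_year_ago.get(name) != value}

print("a year ago:", a_year_ago)
print("today:     ", today)
print("changed:   ", changed)
```

Because nothing in the log is ever overwritten, the question "what was our policy on that date, and what changed since?" becomes a simple replay rather than an archaeology project.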

Q: Can you check for specific scenarios that your regulator or correspondent bank is expecting?

- Machine Learning: Difficult. You’ll need to hack the ML models by feeding in artificial training data built from your partner’s expected “alert-worthy” scenarios.

- Rule-based approach: Moderate. You can type in the exact rule they expect you to run. But most likely those generic “expected” rules will create a ton of false positive alerts that you’ll need to handle manually — IF you don’t have an automated alert resolver in place.

At Salv, many of us are huge fans of ML/AI and have used it ourselves, including in the AML domain, because, as we mentioned, there are use cases that absolutely make sense for ML/AI. We wish it weren’t true, but we have seen that, if it isn’t used carefully by extremely talented AML data scientists, significant risks can be introduced that you’ll discover far too late. If you do manage to get an ML/AI system set up, it will appear to work flawlessly. Until it doesn’t.

The dangers of unsupervised ML/AI modelling

Vendors offering ML/AI often help build and maintain your models. That’s great, but can you trust that these models are right for your company? These companies are doing their best with what information they have, but there are a few things to keep in mind.

If their models screw up, these companies don’t have the financial liability you do

They won’t be the ones who suffer the reputational damage if the financial institution gets caught up in an AML scandal. This lack of downside will likely push them to take risks the MLRO wouldn’t dare to. To mitigate, you’ll ideally hire top data scientists directly into your AML team to align risk-taking incentives, and then double down on governance.

They don’t know your business like you do

They’re not talking with your customers and your unique set of criminals daily. They don’t know your product loopholes. They don’t know your risk appetite. They’re just analysing data. Though they’re probably incredibly smart, they’re still missing enormous context for making decisions. To mitigate this, you’ll need to build a strong partnership. Your financial institution will need to commit ample time building up your vendor’s data scientists’ knowledge.

The best system for most organizations in 2020

As a data lover myself, it pains me to say so, but I firmly believe that the AML space just isn’t ready yet for ML/AI.

Sure, I think that it can help some, as long as you have a team who knows ML/AI, the AML domain, and your products really, really well. And if you use it in small doses with close supervision. But it’s not an end in itself. And, today, with the vast experience we’ve had looking at the intimate details of so many companies’ AML, the best system for most companies out there is:

1. Rule based

Many narrowly defined rules are honestly best. If your rules are too broad, then your team gets killed with pointless false positives. So they’ll need to be narrowly defined. Even so, they’re dead easy for anyone in the compliance team, partner institutions, customer support or regulators to understand.

2. Fully controlled and understood by the compliance team

AML risk decisions are far too important to be outsourced to external vendors. Vendors can — and should — help with technology, but the risk decisions have to be made by the MLRO and their team. The team is smart because they’re the ones deeply analyzing suspicious behaviour all day long — so the tools need to be able to incorporate that new knowledge instantly into narrowly defined automated rules.

It may not surprise you to find that this is exactly what we’ve focused on building at Salv.

Will Salv ever use ML/AI?

We’ll use ML/AI when our customers are ready for it. We’ll use it when we’re confident we can introduce it and have the compliance team themselves fully manage it.

As we said from the start, we love ML/AI and many in our team have built these systems in production in high-performance environments like Skype, TransferWise, and Guardtime. We’re confident that we know what we’re doing.

There are AML areas that are safer for ML/AI approaches and we’re implementing those first. For instance, in the sanctions world, we’re already far down the path of using ML to automate the repetitive, obvious false positives. We’ll soon launch techniques for suggesting new rules or tweaks to thresholds that will capture 80% of the benefit of ML/AI, yet still allow the compliance team to stay firmly in the driver’s seat.

The regulatory environment of today is built for Toyotas

Getting back to the Porsche vs Toyota dilemma — what kind of roads do we usually drive on? Are the roads straight, clearly defined, and free of obstacles so we can drive as fast as we can to fight criminals? Then this is great for a Porsche. Or are there unexpected roadblocks, signs, detours, and careless drivers all around us? On top of that, do we have one goal — beat criminals! — or many? Then Toyota is likely the better choice because, from my many conversations with those in compliance over the years, no one has ever told me that risk management is simple, fast, and carefree.

Maybe you’re getting depressed thinking you’re going to have to revert to old technology. But let’s think about modern Toyotas for a moment. They’re brimming with technology built to keep you safe and in control, yet still comfortable. It’s almost a farce to liken them to cars built in the 80’s and 90’s.

And that’s similar to many large, legacy AML technology providers. Sure, a lot of them focus on rule-based systems, like Salv does. In fact, some of them even add on AI or ML to show they have adapted, but that’s a bit like putting an overly powerful 400hp Porsche engine into a rusty, 30-year-old Corolla. It’s not very effective.

Rules-based systems built today and rules-based systems built 20-30 years ago are worlds apart. Legacy providers didn’t build for navigating the obstacles of today’s environments. Salv did. We can even see some of the evidence as we all watch helplessly while bank after bank, often using legacy providers, is hit with AML scandals. Those legacy systems just weren’t built to keep up with the fast-paced criminals of today. Salv was.

A mere 10 blunt rules created by a vendor when the system was first implemented in a bank have no hope of fighting today’s organized crime. Yes, ML/AI approaches can be fast, the fastest even, but there are still so many challenges. I still firmly believe that many narrowly defined rules, encoded with tons of compliance intelligence from the humans closest to your product and the criminals using your system, are what work best in today’s compliance.

Okay, I’ve taken up a lot of your time, but one final thought on innovation in the AML space.

There are safer innovative technologies that can help

In the summer of 2019, Salv, in partnership with Sharemind Cybernetica, built an advanced piece of kit as part of the Financial Conduct Authority’s (FCA) 2019 Hackathon. It allows financial companies to anonymously and safely share data between themselves in the interest of detecting money laundering. The system is GDPR-compliant, and there’s some incredibly groundbreaking cryptographic technology behind it that means data becomes impossible to leak or trace.

As I write this, we’re in the final phases of signing our first large trial customers. And, as you can already tell, this will be part of a whole new era of crime fighting.

So to wrap up, Porsches are cool — and so are ML/AI systems. Toyotas are boring — and so are rules. But the decision of which is better for you, right now, depends on your company. And your regulatory roads.

Enjoy the drive.
